Lemons

A lemon is attractive to the eye and bitter to the taste. In American slang, a "lemon" is a product that looks good on the outside but is broken on the inside — a clunker. In 1970, Akerlof used the metaphor to explain how markets collapse when buyers cannot distinguish quality. Today, nearly every digital product presents itself as "AI-powered." The signal shines. What's inside is harder to know.

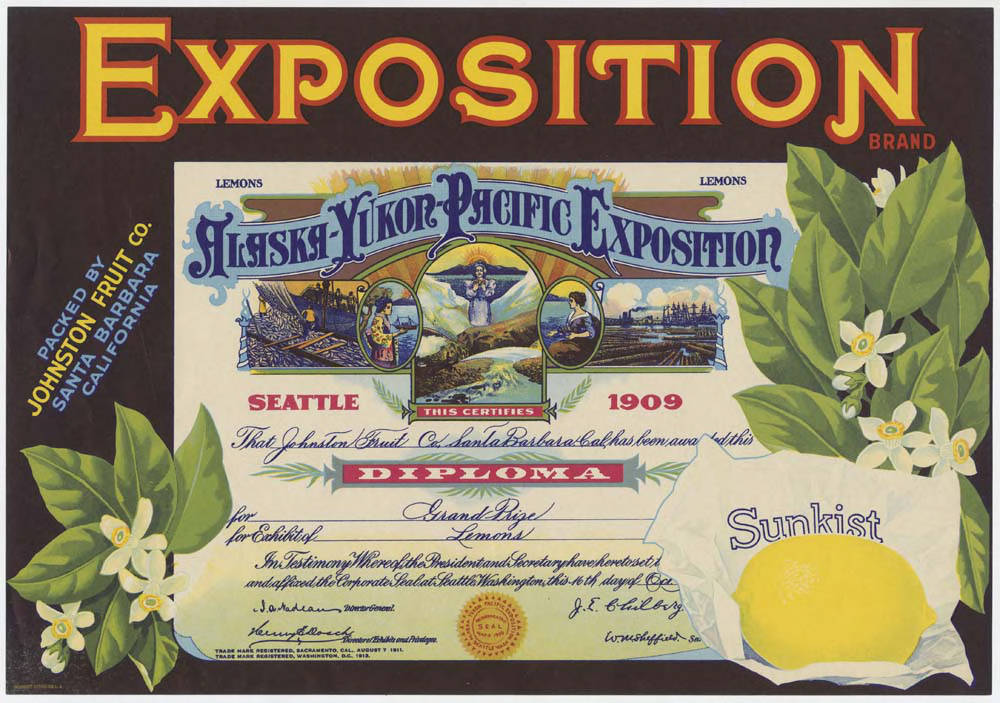

Exposition Brand Sunkist lemons crate label, c. 1912. California citrus growers competed through label design because the fruit inside each crate was indistinguishable. Museum of History and Industry, Seattle.

A lemon is attractive to the eye. Bright yellow, smooth skin, a shape that fits in the hand. Nothing about its exterior warns of what happens when you bite into it. The bitterness is invisible from the outside.

In American slang, a "lemon" is a product that looks good but is broken inside. A clunker. A used car with gleaming paint and an engine about to give out. The metaphor is precise: what defines a lemon is not that it is bad, but that you cannot know it is bad before trying it. The exterior does not reveal the interior.

In 1970, George Akerlof turned that everyday intuition into a paper that would earn him the Nobel Prize. In The Market for "Lemons", published in the Quarterly Journal of Economics, he started from an observation about the used car market. A seller who has meticulously maintained his car knows it is good. But the buyer cannot know that. From the outside, a well-maintained car and a mechanical disaster look the same. Facing this uncertainty, the buyer offers a price that reflects the average quality in the market. The seller of the good car, who knows his vehicle is worth more, walks away. The bad ones remain. Average quality drops. The buyer adjusts downward. And so, in a descending spiral, the market fills with lemons and good products disappear.

The mechanism is information asymmetry: one party knows something the other cannot verify. Akerlof showed that this asymmetry alone can destroy entire markets. No fraud is required. No bad faith. It is enough that the buyer cannot tell the difference.
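The spiral is easy to reproduce in a few lines. What follows is a minimal sketch, not Akerlof's own model: it assumes a hypothetical market of 100 cars whose quality is spread evenly between 0.01 and 1.00, sellers who refuse any offer below their car's quality, and a buyer who values any car at 1.5 times its quality but can only observe the average of what is still for sale. The function name `unravel` and every number here are illustrative assumptions.

```python
# Illustrative sketch of the lemons spiral. All numbers are assumptions.

def unravel(qualities, buyer_premium=1.5, rounds=10):
    """Simulate repeated price offers in a market with hidden quality.

    Each seller knows their car's quality and will not sell below it.
    The buyer cannot see quality, so each round they offer
    buyer_premium * (average quality of the cars still for sale).
    Returns a list of (price, cars_on_market) tuples, one per round.
    """
    on_market = list(qualities)
    history = []
    for _ in range(rounds):
        if not on_market:
            break
        average = sum(on_market) / len(on_market)
        price = buyer_premium * average
        history.append((price, len(on_market)))
        # Sellers whose car is worth more than the offer walk away.
        on_market = [q for q in on_market if q <= price]
    return history


# Hypothetical market: 100 cars, quality spread evenly from 0.01 to 1.00.
qualities = [i / 100 for i in range(1, 101)]
history = unravel(qualities)

for round_number, (price, cars) in enumerate(history, start=1):
    print(f"round {round_number}: offer {price:.3f}, cars on market {cars}")
```

Under these assumptions every trade would benefit both sides, since the buyer values each car more than its seller does. The offer still falls round after round, and the best cars are the first to leave.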

Now look around. At some point over the past two years, almost every digital product you know added the word "AI" to its description. A CRM that had been running on conventional business rules for eight years became "AI-powered." A project management tool that had always sorted tasks by manual priority now did it "with artificial intelligence." Some declare themselves "AI native" despite having been on the market for years — native implies they were born that way, but if your product existed in 2019 and made no mention of artificial intelligence, you are not native to anything. You are a convert.

The interesting question is not whether they are lying (many are not; they have integrated real capabilities) but why they need to say it so urgently. What exactly are they signaling, and to whom?

The market clouds over

Erlei and colleagues have applied this framework to the AI systems market, and what they find is exactly what the theory predicts. In When Life Gives You AI, Will You Turn It Into A Market for Lemons? (2026), they show that the asymmetry between those who develop AI systems and those who buy them is structural. The buyer cannot evaluate the real quality of an AI system before purchasing and integrating it into their context. Demos are controlled. Benchmarks measure performance under laboratory conditions. Case studies are cherry-picked. The seller knows the limitations — the hallucinations, the biases, the edge cases where the system fails — and the buyer does not.

In 1961, George Stigler formalised the idea that searching for information has a cost: comparing, evaluating, testing. In The Economics of Information, he showed that rational buyers stop searching when the cost of one more inquiry exceeds its expected benefit. In the AI market, that search cost is enormous. Evaluating whether a model works well for your specific use case requires technical expertise, extended pilots, and integration with your data. Most organisations cannot afford it. So they do what any rational agent would do under those conditions: they choose the product that presents best.
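The stopping rule can be made concrete with a toy calculation. This is a sketch under stated assumptions, not Stigler's original setup (which concerned prices): vendor quality is assumed to be drawn uniformly between 0 and 1, so the expected quality of the best of n vendors is n / (n + 1), and each evaluation is assumed to cost a fixed amount in the same units. The names `expected_best` and `vendors_worth_evaluating` are hypothetical.

```python
# Toy version of the search stopping rule. Assumes vendor quality is
# uniform on [0, 1] and every evaluation has the same fixed cost.

def expected_best(n):
    """Expected quality of the best of n vendors drawn uniformly from [0, 1]."""
    return n / (n + 1)


def vendors_worth_evaluating(cost_per_evaluation, max_vendors=1000):
    """Number of vendors a rational buyer evaluates before stopping.

    The buyer always evaluates at least one vendor, then keeps going
    only while the expected gain from one more evaluation exceeds its cost.
    """
    n = 1
    while n < max_vendors:
        marginal_gain = expected_best(n + 1) - expected_best(n)
        if marginal_gain <= cost_per_evaluation:
            break
        n += 1
    return n


cheap_search = vendors_worth_evaluating(0.01)   # evaluation is nearly free
costly_search = vendors_worth_evaluating(0.2)   # evaluation needs a long pilot
print(cheap_search, costly_search)
```

When evaluation is cheap, the buyer compares several vendors. When it is expensive, the rule says to stop after the first one, which in practice means stopping at whichever product made the best first impression.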

The signal

In 1973, Michael Spence proposed an elegant solution to Akerlof's problem. In Job Market Signaling, he observed that in labour markets, where the employer cannot know in advance whether a candidate is good, candidates use signals to distinguish themselves. The classic signal is education. A university degree works as a signal not necessarily because it teaches relevant skills, but because obtaining it is costly (in time, effort, money), and more costly for those who lack the ability it signals. It is a separating signal: it distinguishes types precisely because not everyone can emit it.

The key to Spence's model is that a signal only works if it is sufficiently costly for low-quality actors. If it were free, everyone would emit it and it would distinguish nothing.

Applied to AI: at first, implementing artificial intelligence in a product was a genuinely costly signal. It required research teams, proprietary data, training infrastructure. Only companies that truly invested could do it. The signal separated. But from 2023 onwards, the cost of "having AI" collapsed. Language model APIs made it possible for any product to add a chatbot, an assistant, a summarisation or text generation feature with weeks of integration work. The signal became cheap.

When a signal is cheap to produce, it stops separating. In Spence's terminology, we move from a separating equilibrium, where the signal distinguishes good from bad, to a pooling equilibrium, where everyone emits the same signal and the market cannot distinguish quality. That is exactly what we observe: everyone is "AI-powered," and that makes the label meaningless.
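The condition behind that shift fits in a few lines. The sketch below is a deliberately simplified two-type version of the signalling logic, not Spence's full model: it assumes the market pays a fixed premium to anyone who emits the signal, and that a seller emits it whenever the premium exceeds their own cost of doing so. The function name `signalling_outcome` and all numbers are hypothetical.

```python
# Simplified two-type signalling check. The premium is held fixed here;
# in a real pooling outcome the premium itself would erode over time.

def signalling_outcome(premium, cost_high, cost_low):
    """Classify the outcome of a simple two-type signalling game.

    premium   -- extra value the market pays to anyone emitting the signal
    cost_high -- cost of emitting the signal for a high-quality seller
    cost_low  -- cost of emitting the signal for a low-quality seller
    """
    if cost_low < cost_high:
        raise ValueError("the sketch assumes the signal costs low types at least as much")
    high_signals = premium > cost_high
    low_signals = premium > cost_low
    if high_signals and not low_signals:
        return "separating"
    if high_signals and low_signals:
        return "pooling"
    return "no one signals"


# Hypothetical costs: building AI in-house versus calling a model API.
before_apis = signalling_outcome(premium=10, cost_high=4, cost_low=25)
after_apis = signalling_outcome(premium=10, cost_high=1, cost_low=2)
print(before_apis, after_apis)
```

Nothing about the premium changes between the two calls. The only thing that moves is the cost for the low-quality seller, and that alone is enough to turn a separating signal into a pooling one.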

The familiar cycle

This has happened before. In the late 1990s, companies of all kinds rebranded themselves around the internet, many by adding ".com" to their names. Pets.com, Webvan, Kozmo.com. Pet food, groceries, courier delivery: lines of business that existed before the internet and would continue to exist after it were presented as internet companies. The signal "we are a .com company" attracted investors, talent, and attention. Until it stopped separating, because everyone was emitting it, and the market discovered that many of those companies were nothing more than conventional businesses with a new domain.

A decade later, the cycle repeated with "cloud." Products running on their own servers declared themselves "cloud-based." Then with "mobile-first": applications that were not mobile-first but claimed to be. Each technological wave follows the same pattern: a genuine innovation generates a valuable signal → the signal attracts investment and attention → the cost of emitting the signal drops → everyone emits it → the signal becomes noise → the market needs a new separating signal.

The pattern is so regular it should concern us. Because what it describes is not an information failure that can be corrected with transparency. It is a structural property of how markets process technological novelty.

The intelligent lemon

Let us return to Akerlof. When the "AI" signal stops distinguishing quality, the buyer finds themselves in the position of the used car buyer: unable to separate good products from bad. They offer an average price. Good products are undervalued. Bad ones, overvalued. Sellers with genuinely good AI are frustrated: they have invested in real capability but the market does not reward them because the label is worthless.

The most interesting finding from Erlei and colleagues is that there is a partial way out: disclosure of limitations. Sellers who openly admit their system's weaknesses (where it fails, what it cannot do, in which contexts it performs poorly) generate better market outcomes than those who present flawless demos. It seems counterintuitive, but the logic is purely Spencean: admitting limitations is costly for bad products (because they have far more to reveal) and relatively cheap for good ones. It is a new separating signal.

But this is where Herbert Simon enters the conversation. In Designing Organizations for an Information-Rich World (1971), Simon observed that a wealth of information creates a poverty of attention. In a market where everyone says "we are AI" and some add transparency reports and performance audits and limitation documentation, the problem shifts: it is no longer that there is no information. It is that there is no attention to process it. The buyer, who could not evaluate quality before, now cannot evaluate the quality of the evaluations either.

"In an information-rich world, the wealth of information means a dearth of something else: a scarcity of whatever it is that information consumes. What information consumes is rather obvious: it consumes the attention of its recipients."

— Herbert Simon, Designing Organizations for an Information-Rich World (1971)

The evaluated evaluator

There is something that distinguishes this wave from previous ones.

".com" could not evaluate whether a company was truly digital. "Cloud" could not audit whether a service actually ran in the cloud. They were inert labels. But AI is a technology that, at least in theory, could be used to evaluate the very claims made about AI. A system that analyses benchmarks, compares real performance against stated performance, audits demos against production behaviour.

The paradox is that the evaluator would be of the same kind as the evaluated. And in a market where information about quality is already asymmetric, introducing an opaque intermediary does not solve the problem — it displaces it one level up. Who evaluates the evaluator? With what quality signals?

Kenneth Arrow, in Economic Welfare and the Allocation of Resources for Invention (1962), formalised the fundamental paradox of information goods: you cannot know how much information is worth until you have it, but once you have it you no longer need to buy it. AI systems are information goods par excellence, and experience goods in Shapiro and Varian's terminology: you do not know if they work until you use them in your context, but you cannot use them without first investing in them.

If the "AI" label stops distinguishing quality — as ".com" stopped distinguishing it in 2001 — what will be the next separating signal? Or are we facing a deeper asymmetry, where the complexity of the object being evaluated means no signal can remain separating long enough?

Information economists have been mapping this territory for sixty years. Stigler described the cost of searching. Arrow described the paradox of valuing what you do not know. Akerlof described how markets collapse when you cannot tell the difference. Spence described how costly signals restore separation. Simon described why, even with all the information in the world, attention is finite.

What they did not describe — what perhaps cannot be described from within economics — is why each new technology repeats the same sequence, as if the market had no memory. Or as if memory were not enough.